Personal Knowledge Management Handbook

Author: C. Sean Burns

Date: 2025-06-13

Email: sean.burns@uky.edu

Website: cseanburns.net

GitHub: @cseanburns

This short book was written and developed for a course on personal knowledge management taught at the University of Kentucky's School of Information Science.

How to Use this Book

Text and Video

In the course I teach, sections of this book will be accompanied by a video demonstrating the practices described in those sections. Try to read through the text first, and then watch the video. Revisit the text to help cement the ideas in place.

The video demonstrations are available to students in my Personal Knowledge Management course.

Additional Readings

Each section includes links and references for additional reading. Students are encouraged to open these links and study the additional readings.

Markup

There are two markups that I want to bring to your attention:

Text in code blocks indicates a command that you should test for yourself. For example, I demonstrate search queries in the sections that follow. When I demonstrate a Google search, the search query will appear in a standalone box with a basic font and a different color background (depending on the theme you're using).

basketball uky

I occasionally insert some asides into the text. These asides generally contain notes or extra comments about the main content. Asides look like this:

This is an aside. Asides will contain extra information, notes, or comments.

Theme

At the top of the page is an icon of a paint brush. The default theme is darker text on a light background, but you may change the theme per your preferences.

Printing

I intend this book to be a live document, and therefore it'll be regularly updated. But feel free to print it, if you want. You can use the print function to save the entire book as a PDF file. See the printer icon at the top right of the screen to get started.

If you're familiar with git and GitHub, you can also fork the repository for this book at

https://github.com/cseanburns/personal_knowledge_mgmt.

About

Note that this work is licensed under the GNU Free Documentation License. See the licensing page for more details.

This book was generated using mdbook.

Copyright (C) 2022 C. Sean Burns

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.3 or any later version published by the Free Software Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts. A copy of the license is included in the section entitled "GNU Free Documentation License".

Introduction to Personal Knowledge Management

What is personal knowledge management (PKM)?

What is personal knowledge management (PKM)? Well, I'd hazard a guess that we depend largely on two main sources to search for, locate, and retrieve information, either daily or for more extensive work. These two sources are the people we know and the search engines we use on a daily basis, like Google. Relying on these two kinds of sources can get us by for many things. At times, though, we will require more rigorous sources because the task at hand requires better information or the risks involved in making a decision are weighty enough to require more certainty. Good, thorough information may reduce our uncertainty or enable us to measure levels of uncertainty, and this is helpful when making decisions, writing papers, completing projects, and working at our first major job. Therefore, in this book our first learning goal is focused on information sources, and specifically to:

- become aware of the variety of information sources that exist,

- learn how to search those sources for relevant information, and

- learn how to evaluate the relevant information that we find.

This covers part of what we mean by personal knowledge management. In addition to knowing about, locating, and evaluating information, it is also important to manage that information and to develop good workflows that help with that. It's great to know that UK Libraries exists and that we can use it to locate good information, but if we don't use the library or other great resources available to us because we're not in the habit, then what's the point of all this good information? And what's the point in having access to all these information technologies to become informed, to do good things, and to make our lives better and easier?

I want to live in a good information age rather than merely an information age. Therefore, our second learning goal is focused on the tools and technologies that will help us build personalized information and knowledge workflows. As stated above, often the point of acquiring good information is to do some task or to make a decision. A workflow is good if it fits our style, or our way of getting things done, because that makes it more likely that we'll adopt the workflow and stick with it. A good workflow also maximizes our use of good sources of information and our desired outcomes, like a paper for a class, a project for a boss, or a decision that involves some uncertainty.

Our second learning goal is, therefore, focused on personal knowledge workflows, and specifically, to:

- become aware of the tools and technologies designed to manage personal knowledge workflows,

- learn how to use these tools and technologies, and

- incorporate these tools and technologies into our personal knowledge workflows.

Our final learning goal focuses on outcomes and builds off the first two goals. Specifically, our final goal is to develop habits using the tools and technologies that incorporate a variety of good sources of information to accomplish a task or make a decision. That is, we want to take advantage of the many kinds of information sources that exist and to make a habit of using them in a personalized, effective way. In the end, though, we often want to accomplish some task and get things done (e.g., write a paper or create a software program) or make a decision that involves some risk (e.g., buy a house or choose a healthcare procedure). In those cases, having a personal knowledge workflow that incorporates high quality sources of information facilitates accomplishing such tasks or making such decisions.

Topic Outline

To accomplish the three goals above, this book covers:

- the differences between information sources and resources,

- how to find new kinds of information sources and resources,

- how to use reference management software,

- how to search the web and databases, and

- how to evaluate information sources.

This book is also project based. We will use the material and tools covered here to produce content for a Wikipedia article that you have selected.

Email Management

I start this book by covering two basic topics in Chapter 2: email management and generative AI. We begin with email management because email continues to be an important means of communication, especially in professional contexts, and because our inboxes can easily become overwhelming. As a result, we can miss out on important information when our inboxes are overloaded. Therefore, we will discuss ways to handle email effectively.

Generative AI

I wrote the first draft of this book during the summer of 2022, just a few months before the public release of ChatGPT and later Google's Bard (now Gemini). ChatGPT, Gemini, Claude, and other AI chatbots are designed to have human-like conversations. Each is super useful and interesting, and may even be fun to use. Each can produce papers and code, and can help us understand more about a variety of topics and issues. To make my point, I asked ChatGPT and Gemini about what they do. Here is what ChatGPT told me in 2026:

I’m a general-purpose AI assistant. In practice that means a few big things:

I help you think — clarifying ideas, challenging assumptions, and turning vague thoughts into something you can actually use. If you’re wrestling with a concept, an argument, a research question, or a decision, I can help you pull it apart and see what really matters.

I help you make things — writing, editing, coding, outlining, planning, designing. You can hand me a messy draft, a half-formed idea, or a goal and I’ll help turn it into something structured and workable.

I help you learn — explaining topics, building intuition, finding connections between ideas, and adjusting explanations to your level and goals rather than dumping textbook prose on you.

I help you navigate information — summarizing, comparing, checking consistency, and (when needed) pulling in up-to-date facts from the web.

And I help you reflect — journaling, thinking through trade-offs, noticing patterns in what you say and do, and nudging you when you’re drifting into blind spots or wishful thinking.

What I don’t do is replace your judgment, creativity, or responsibility. I’m best when I’m acting like a very fast, very patient thinking partner: useful, sometimes challenging, occasionally surprising, but never the one in charge.

If you want, tell me what you’re hoping to use me for — quick answers, deep thinking, writing, teaching, something else — and I’ll tune myself to that.

And here is what Gemini told me in 2026:

Think of me as your versatile, AI-powered thought partner. I’m Gemini 3 Flash, and I'm designed to help you bridge the gap between an idea and its execution—whether you’re writing, brainstorming, or creating media.

Here is a breakdown of what I can do for you:

🧠 Intellectual & Creative Support

- Synthesize & Summarize: I can take complex documents or long articles and boil them down to the essentials.

- Draft & Edit: From professional emails and resumes to creative stories and code, I can help you write from scratch or polish your existing work.

- Brainstorm: I’m great for "rubber-ducking"—talk through a problem with me, and I’ll offer new perspectives, counter-arguments, or fresh ideas.

- Analyze Data: Upload a file, and I can help you find trends, explain formulas, or visualize information.

🎨 Visual & Video Creation

I’m not just about text; I have powerful tools for generating and editing visual content:

| Tool | Capability | Daily Limit |
|---|---|---|
| Image Generation | Create or edit high-fidelity images using the Nano Banana model. | 100 uses |
| Video Generation | Generate short, high-quality videos with audio using the Veo model. | 2 uses |

📱 Real-Time Interaction (Gemini Live)

If you use the Gemini app on Android or iOS, we can go beyond typing:

- Natural Conversation: We can talk back and forth in real-time. You can even interrupt me!

- See What You See: You can share your camera feed so I can help you with things in your physical environment (like identifying a plant or fixing a sink).

- Screen Context: I can help with whatever is currently on your phone screen.

🛠️ Technical Details

I am currently operating in the Free tier. While I am highly capable, I do have some guardrails—for example, I won't generate images of key political figures, and I'll always aim to be factually accurate while maintaining a helpful, peer-like tone.

Would you like to try something specific right now, such as generating an image or brainstorming a project you're working on?

In my experience, I have enjoyed using these services because each can make various tasks a bit easier. But there are two things to note. First, I've learned that there's a difference between using them and using them well, just like there's a difference between entering basic search queries into Google search and using advanced search queries.

Second, do not use them as a substitute for your own thinking. I like my brain and what it can do. Activities like reading, writing, coding, and conversing help me learn and help me think, and that's good for my brain and for me. "I think, therefore I am," wrote the philosopher René Descartes several centuries ago, and we want to be wary of how much we ask AI to think for us at the risk that we stop thinking for ourselves.

Therefore, we will spend a little time with generative AI, learning how to use it well and ethically. This means we will learn how to use it to augment our thinking and manage what we're learning, rather than to replace our thinking.

Remaining Lessons

In Chapters 3 and 4, we focus more on the fundamentals of PKM. In Chapter 3, we learn how to use the two major information resources: the library and the web. We also learn about reference managers, which are a lot like advanced bookmarking applications. Applications like these help us build our own collections of information sources, use those sources as needed, and are key to developing a personal knowledge workflow.

In Chapter 4, we learn the basics of information search, or information retrieval, and how it works on the web and in bibliographic databases, like we find using our library. We also cover how to recognize relevant information and then evaluate it.

In Chapter 5, we begin to focus on our final project, which is to edit a Wikipedia article. We will cover additional resources, both from the library and the web, in order to find relevant information for our chosen Wikipedia page. We will also use our reference manager to help with the project. Chapter 5 is where we work to build out our personal knowledge workflow using the tools and techniques we covered in prior chapters.

Conclusion

We live in a knowledge society. Recognizing that, the goal of this course is to become effective at using the tools to locate, evaluate, and use information in order to build personal knowledge workflows. Let's get started.

Welcome to the PKM Basics Section!

Welcome to Chapter 2, the PKM Basics section of our Personal Knowledge Management (PKM) course. In this section, we'll develop two basic skills that help manage our personal and professional knowledge effectively.

First we'll tackle Email Management. We all know that our inboxes may feel like beasts that are impossible to tame. We're going to explore strategies to organize, prioritize, and manage email more efficiently. We'll learn how to reduce clutter, deal with important messages promptly, and keep our sanity intact amidst the constant barrage of digital communication.

Second, we'll discuss the exciting (and worrisome???) world of Working with Generative AI. Generative artificial intelligence is a powerful tool. We'll look at how AI can help you to generate ideas and polish them. But AI can also get you into trouble and can certainly help you cheat on your work. Therefore, we'll discuss its ethical uses and learn to use AI to augment our own thinking and intelligence rather than replace it.

Chapter 2 is a bit of an island compared to the rest of the course. Like the remaining chapters, this chapter builds skills that will serve you well as students and knowledge workers, but the remaining chapters also work towards a final project.

In the meantime, let's get started with email and AI.

Email Management

Readings

- Strategies

- Duffy, J. (2019). 11 Tips for Managing Email More Efficiently. Retrieved 19 July 2022, from PC Mag

- Tips and Tools for Managing Email. (2021). Retrieved from Colorado College

- Outlook

- Manage email messages by using rules. Retrieved from Using Rules - Microsoft

- Manage and organize: Search. Retrieved from Search - Microsoft

- Manage and organize: Clean up Inbox. Retrieved from Clean up Inbox - Microsoft

- Flag email messages for follow up. Retrieved from Flag messages

- Gmail

- Create rules to filter your emails. Retrieved from Using Rules - Gmail

- Create labels to organize Gmail. Retrieved from Create Labels - Google

- Group emails into conversations. Retrieved from Group emails - Google

- Search in Gmail. Retrieved from Search - Gmail

- Create & manage Tasks in Google Calendar. Retrieved from Create & manage Tasks

Introduction

Many people today use a variety of social media and text messaging platforms to communicate with each other, and this is on top of, or in spite of, the existence and use of email, which still reigns as the dominant mode of communication across a variety of industries. We can refer to this experience of the abundance of communication platforms and the abundance of messaging on these platforms as communication overload. This concept basically means that there are too many messages coming at us from too many platforms.

Communication overload is the result of:

- increased channels of communication: email, texting, social media, etc.

- information overload: too much information coming across all these increased channels of communication

- interruptions and distractions: constant notifications and the need to switch between channels of communication. This reduces our ability to focus, study, and learn

Communication overload therefore causes:

- reduced productivity: it becomes difficult to prioritize information, process it, and complete tasks when too much information is coming at us through too many communication channels

- increased stress and anxiety: too much information through too many channels of communication can lead to feeling overwhelmed and anxious. It's a literal assault on our senses

- cognitive issues: too much information through too many channels of communication can negatively impact decision-making and limit our concentration

- burnout: when we can't concentrate and focus because we are overwhelmed, we are more likely to experience burnout

Unfortunately, it's beyond the scope of this lecture to address strategies for managing communication across multiple platforms. But since email reigns supreme as a form of communication across many industries, it's important to learn some strategies for managing our email and avoiding inboxes with hundreds or thousands of unread emails.

Strategies

The first set of readings listed above focuses on overall strategies for managing our email inboxes. The readings suggest a number of strategies, but the two main strategies focus on:

- organizing our email and

- searching for email.

Some of the specific suggestions the readings make to implement those strategies will depend upon whether we use email in the web browser, via a desktop application, or a phone app. Thus it may take some exploration to figure out how to apply these lessons based on what you use.

Organization

Each email service we use (e.g., Outlook, Gmail, etc.) offers similar but different ways to organize our email. Here are some ways to organize your emails for two of the most common services, but keep in mind that the underlying principles apply elsewhere:

- We can create folders (Outlook) or labels (Gmail), and then archive or group email into the respective folders or by the respective labels.

- We can create rules to filter email to specific folders or by labels.

For example, I have several folders in Outlook. I have a folder titled university email and a rule that sends all email from UK officials and UK mailing lists to that folder. I have another folder titled Canvas email, where all email from my courses is routed. And so on. Most of these emails are not time-sensitive (e.g., mailing list email), or it's simply helpful to group them by category (e.g., Canvas student email). Using folders and rules helps keep email organized. As a student, you might want to create folders for specific courses, specific majors/minors, administrative functions (registration-related emails, for example), and the like.

Gmail doesn't use the terms rules or folders, per se, but uses the concepts of filters and labels. Gmail will automatically assign labels to your email, but you can apply more personalized labels, and then you can set up filters that automatically assign labels to email that matches the logic you set up with the filters.
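To make the logic behind rules and filters concrete, here is a minimal Python sketch of what a filter does: it checks each message against a condition and, on the first match, assigns a folder or label. It does not use any real Outlook or Gmail API. The `Message` and `Rule` types, the example addresses, and the fallback label are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class Message:
    sender: str
    subject: str


@dataclass
class Rule:
    # Hypothetical rule: if `needle` appears in the chosen field, apply `label`.
    field: str   # "sender" or "subject"
    needle: str
    label: str


def apply_rules(message, rules, default="Inbox"):
    """Return the label of the first matching rule, or the default."""
    for rule in rules:
        value = getattr(message, rule.field).lower()
        if rule.needle.lower() in value:
            return rule.label
    return default


# Made-up addresses standing in for mailing lists and course notifications.
rules = [
    Rule(field="sender", needle="@lists.example.edu", label="university email"),
    Rule(field="sender", needle="notifications@canvas.example.edu", label="Canvas email"),
]

msg = Message(sender="notifications@canvas.example.edu", subject="New submission")
print(apply_rules(msg, rules))  # Canvas email
```

Real email services evaluate rules in a similar spirit, but the conditions and actions they offer differ, so check the help pages in the readings for the options your service supports.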

Searching

All email services provide some kind of search, even if implemented in different ways. And like regular search engines, Outlook and Gmail both offer advanced searching abilities.

In Outlook, you can search:

- in specific folders

- by whom an email is From

- by whom you sent an email To

- by a CC (carbon copy) address or person

- by Subject line

- by Keywords (that may appear in the email message)

- within a specific date range

In Gmail, you can do most of the same kinds of things, but you can also:

- search by email size

- search by emails that do not have certain terms

Searches are very similar to filters, and in fact, in both Outlook and Gmail, you can save a search as a filter if you find that your search query can be re-used.

Searching not only helps you find specific emails on specific topics and within specific time frames, it's also a good way to mass delete old and unnecessary email.
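Gmail's search box accepts search operators such as `from:`, `subject:`, `larger:`, and `older_than:`, plus a leading `-` to exclude a term. As a rough sketch, the Python helper below assembles such a query as plain text that you could paste into the Gmail search box. It does not connect to Gmail, and the function name, its parameters, and the example address are hypothetical.

```python
def build_gmail_query(sender=None, subject=None, larger_than=None,
                      older_than=None, exclude=()):
    """Assemble a Gmail search string from optional parts."""
    parts = []
    if sender:
        parts.append(f"from:{sender}")
    if subject:
        # Parentheses group several words that should all appear in the subject.
        parts.append(f"subject:({subject})")
    if larger_than:
        parts.append(f"larger:{larger_than}")      # e.g. "10M" for 10 megabytes
    if older_than:
        parts.append(f"older_than:{older_than}")   # e.g. "1y" for one year
    for term in exclude:
        parts.append(f"-{term}")                   # leave out emails with this term
    return " ".join(parts)


query = build_gmail_query(sender="newsletter@example.com",
                          older_than="1y", exclude=["invoice"])
print(query)  # from:newsletter@example.com older_than:1y -invoice
```

A query like this one is a reasonable starting point for finding old mailing list email to delete in bulk, but review the results before deleting anything.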

Other Strategies

The PC Mag and The Washington Post (paywalled) articles suggest that we turn off email notifications. The point is that if we receive a lot of emails, then email becomes too distracting, and distractions are the bane of focus. Instead, The Washington Post suggests that we simply check our email only a few times per day (but probably at most twice a day is sufficient). The aim here is to be intentional about using email (and this applies to any other communication technology) rather than being mindless and distracted by it.

The Washington Post article also suggests applying the four Ds to your emails: "do, delete, delegate or defer." It's up to you how to implement these ideas. But as an example, you could create a do label in Gmail or a do folder in Outlook, and filter emails to those places. However this is implemented, the main goal is to get rid of unnecessary email fast and not let it accumulate. The more emails that sit in our inbox, the more overwhelming they become. In fact, you can unsubscribe from everything that is not important.

Another good idea is to keep personal and work email separate. In fact, have more than one personal email account. Use one personal account as a throwaway account for signing up to random websites. Use your main personal account for personal communication, banking, important social media accounts, and the like. Use your work account or your school account for work or school related tasks only. Be mindful that your work or school account does not belong to you and that you will lose that account when you leave a job or graduate from school. The University of Kentucky has a help page about your various UK accounts that you should read now.

As your lives become increasingly busy, if they're not already, using good Calendar and Task apps can help keep your days organized and your to-do lists manageable. Both Outlook and Gmail integrate Tasks and Calendars in their web apps, and you can drag emails to Tasks or Calendars. The readings above link to help pages on how to use these functions. These are super time-saving functions for me.

Email Clients

Most of us probably use the web version of Gmail, Outlook, etc., but you can also use an email client, or app, for email. The advantage with email apps is that they are oftentimes more powerful than the web app versions. Example email clients include:

- Apple Mail, available on Apple devices

- Outlook, available on Windows, Android, and iOS

- Mozilla Thunderbird, available on Windows and Android with iOS coming soon

Email apps (or clients) are not email providers even if they may be tied to some. They are used for email, just as Google Docs and Microsoft Word are used for word documents. You can use any of the above clients for any email service, including Outlook, Gmail, and more.

Email clients are designed to handle multiple email accounts and provide a unifying interface for all your email. They can work offline, which can be helpful when traveling or otherwise without a connection. They provide calendar and to-do functionality, and they are often better at search. Some email clients, like Thunderbird, can be enhanced with plugins or theming. One of their main benefits is that they can download your email, which is useful if you lose or change email accounts.

Conclusion

In addition to managing our personal knowledge, one of the main points of this work is to become adept at using the various technologies that are available to us. If we're only passive users of communication technologies, then those technologies can become overwhelming. Active and intentional use of technology and of the specific functions that the technologies provide can help improve our lives and our personal knowledge management workflows.

In this lesson, we learned how to think strategically about email services. The two main strategies involve how to:

- organize our email, and how to

- search our email.

Working with Generative AI

Introduction

Generative AI applications like ChatGPT, Claude, Gemini, and others can be useful Personal Knowledge Management (PKM) tools. There are some fundamental keys to using them successfully. These include:

- asking good questions,

- engaging in lengthened exchanges with the AI versus one-off questions, and

- thinking critically about our engagement with AI.

Our interactions with AI are not simply about getting answers. It's difficult to get good answers if we ask bad questions, and sometimes it's necessary to ask a series of questions, to have conversations, in order to get good answers, generate ideas, understand or create content, and make informed decisions.

We also have to be critical about the questions we ask, the questions AI asks us in return, and the answers that AI provides. Companies that provide AI services are providing them for profit, and that often means they design these systems to encourage engagement because more engagement means more profit. Engagement is the purpose of algorithms that keep us on TikTok, Instagram, and other social media platforms, and this kind of designed engagement can be harmful to our physical and mental health (Minamitani, 2024; Hill, 2025).

Engagement with AI is driven less by algorithms that encourage us to endlessly and mindlessly scroll some feed and more by new AI models that encourage us to keep using AI for all of our questions or tasks. On the one hand, engaging substantially with AI is the key to getting good responses. On the other hand, it can also lead to conspiratorial thinking, as Hill (2025) illustrates. This is because AI is the most human of all technologies humankind has created, and like humans, it can give false answers, deceive us, or lead us astray in other ways. This is not necessarily because AI wants to deceive us. Rather, it's because good reasoning relies on valid, true facts, and reasoning from false or made-up premises leads to false answers.

In short, as I was told when I was growing up, don't believe everything you read, and that axiom is as important as ever.

In addition to the keys to success listed above, verify everything AI tells you.

Part of the work of this course is to learn how to use information resources to do just that.

To start though, in this section, we'll delve into the art and science of Prompt Engineering. This is the act of crafting your questions to AI in a way that guides AI to understand and respond with the most useful and relevant information. A well-constructed prompt, or series of prompts, can be the difference between getting a generic response and unlocking valuable insights.

Using AI

To use generative AI successfully, you should implement the following practices:

- Choose a model: Currently, AI technologies are often GPT-based or Reasoning-based. Both kinds are large language models (LLMs), but they are trained differently and have different architectures.

- GPT-based models are more general-purpose, faster, and better at text generation. Their output is based on statistical probabilities in language.

- Reasoning-based models are optimized for problem-solving, analysis, computation, and the like. Reasoning-based models are better at math, science, engineering (including coding), and finance challenges.

- Write clear instructions/prompts: Think carefully about what you're asking AI.

- For GPT models, prompts should be clear, specific, provide context, break down tasks, and utilize examples.

- For reasoning models, minimal prompts are best since these models were designed to reason.

- Provide Reference Text: If using a GPT model, supply context or examples. This can help steer the AI towards the kind of response you're looking for.

- Split Complex Tasks into Simpler Subtasks: If using a GPT model, break down bigger challenges to make them more manageable for the AI. This helps GPT models reason better, but it requires you to know how to reason yourself. (To learn to reason well, take, at the very least, An Introduction to Logic course.)

- Use External Tools: If using a GPT model, integrate other tools and resources to augment the AI's capabilities.

- Test Changes Systematically: If using a GPT model, experiment and evaluate how different approaches impact the AI's responses.
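Several of the practices above (clear instructions, reference text, and splitting a task into subtasks) can be sketched as a small Python helper that assembles a prompt as plain text. This is only one possible layout, and it does not call any AI service. The function name, the section labels, and the sample text are hypothetical.

```python
def build_prompt(instruction, context="", reference_text="", subtasks=()):
    """Assemble a plain-text prompt from optional, labeled sections."""
    sections = []
    if context:
        sections.append(f"Context: {context}")
    sections.append(f"Task: {instruction}")
    if subtasks:
        # Number the subtasks so the model can work through them in order.
        steps = "\n".join(f"{i}. {step}" for i, step in enumerate(subtasks, start=1))
        sections.append(f"Work through these steps in order:\n{steps}")
    if reference_text:
        # Triple quotes mark where the reference text begins and ends.
        sections.append(f'Reference text:\n"""\n{reference_text}\n"""')
    return "\n\n".join(sections)


prompt = build_prompt(
    instruction="Summarize the reference text for a general audience.",
    context="I am an undergraduate editing a Wikipedia article.",
    reference_text="(paste the abstract here)",
    subtasks=["List the main findings.", "Explain each finding in plain language."],
)
print(prompt)
```

Keeping the pieces separate like this also makes it easier to test changes systematically: you can change one section at a time and compare the responses.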

We can learn a lot about how to write clear instructions and prompts, and the Prompt Engineering Guide provides some guidance on this. The Examples of Prompts page covers the following topics on ways to use generative AI. Using Google's Gemini, I've added two additional columns that show which model is better suited for which task and why:

| Task | Better Suited Model Type | Why |

|---|---|---|

| Text summarization | GPT models | GPT models are adept at understanding the main ideas of text and generating concise summaries. Their strength in text generation and pattern recognition makes them suitable for summarizing information quickly and efficiently. |

| Information extraction | Both, depending on complexity | For simple information extraction from well-structured text, both GPT and Reasoning models can be effective. However, for extracting information from ambiguous or complex sources, Reasoning models, with their emphasis on logical deduction and "needle-in-a-haystack" capabilities, may excel. |

| Question/Answering | Both, depending on complexity | For straightforward questions and clear answers, GPT models are generally sufficient. For multi-hop questions, complex queries, or questions requiring analysis of diverse sources, Reasoning models are better equipped to arrive at accurate and comprehensive answers. |

| Text classification | GPT models | While Reasoning models can be used for sentiment analysis, GPT models, particularly when fine-tuned, have shown strong performance in classifying emotions and sentiment. Their ability to understand context and language nuances is beneficial for this task. |

| Conversation | GPT models | GPT models are specifically designed for engaging in natural and coherent conversations. Their ability to generate human-like text and adapt to evolving contexts makes them ideal for building conversational AI applications. |

| Code generation | Reasoning models | Reasoning models, with their focus on step-by-step thinking and understanding of structured data, are better suited for code generation, especially for complex or multi-step tasks. They can generate more accurate and reliable code, and even suggest improvements. |

| Reasoning | Reasoning models | Reasoning models are specifically designed to excel at tasks requiring logical deduction, problem-solving, and multi-step breakdowns. They are trained to "think" through problems and arrive at accurate and well-reasoned conclusions. |

The Prompting Techniques section, from the Prompt Engineering Guide, describes multiple prompts to engage with generative AI. The first few items listed are good entry level approaches for constructing prompts. These include, from basic to more advanced:

- Zero-shot prompting: a prompt that does not provide AI with examples of how to answer the prompt.

- Few-shot prompting: a prompt that provides some examples of how to answer the prompt.

- Chain-of-thought prompting: a prompt, or series of prompts, that encourages AI to show its reasoning process. This is most useful for GPT-based models, since reasoning-based models are designed to work through a chain of thought on their own.

Please read through these techniques. Prompting techniques like few-shot and chain-of-thought are examples of how to help AI reason through your queries. Other reasoning methods are useful, too.
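To make the three techniques concrete, here is a minimal Python sketch that builds each kind of prompt as a plain string. The task, the sentences, and the function names are all hypothetical and only illustrate how the prompts differ in structure. The sketch does not call any AI service.

```python
# Hypothetical task and input, used only to illustrate prompt structure.
TASK = "Classify the sentiment of this sentence as positive, negative, or neutral."
SENTENCE = "The library's new study rooms are wonderful."

# Hypothetical worked examples for the few-shot prompt.
EXAMPLES = [
    ("I waited an hour and nobody helped me.", "negative"),
    ("The database returned exactly what I needed.", "positive"),
]


def zero_shot(task: str, text: str) -> str:
    """Zero-shot: the task and the input, with no worked examples."""
    return f"{task}\n\nSentence: {text}\nSentiment:"


def few_shot(task: str, text: str, examples: list[tuple[str, str]]) -> str:
    """Few-shot: the same task, preceded by a few worked examples."""
    shots = "\n".join(
        f"Sentence: {sentence}\nSentiment: {label}\n" for sentence, label in examples
    )
    return f"{task}\n\n{shots}\nSentence: {text}\nSentiment:"


def chain_of_thought(task: str, text: str) -> str:
    """Chain-of-thought: ask the model to show its reasoning before answering."""
    return (
        f"{task}\n\nSentence: {text}\n"
        "Explain your reasoning step by step, then give the final sentiment."
    )


for prompt in (
    zero_shot(TASK, SENTENCE),
    few_shot(TASK, SENTENCE, EXAMPLES),
    chain_of_thought(TASK, SENTENCE),
):
    print(prompt)
    print("-" * 40)
```

You could paste any of the printed prompts into a chat session and compare the responses. The only difference between them is how much guidance the prompt gives the model.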

Prompt Exchange with Google

To illustrate these types of prompts, below I demonstrate a session with Google's Gemini where I construct a series of prompts to talk about prompt engineering. The goal of the session is to show how using generative AI effectively is iterative, which means that I apply answers from Gemini to generate new responses. You can read through the exchange at the following link:

In this session, I perform the following steps:

- I prompt Gemini about prompt engineering and how it works

- I prompt it to provide an example of few-shot prompting

- I prompt it to provide an example of chain-of-thought prompting

- I give Gemini an example of an abstract from a medical journal article, and I ask it to:

- summarize the abstract so that a layperson can understand it

- extract from the text what the study said was new about its findings

- use the question/answering technique to find out about future studies and to suggest future studies

Lastly, we should know that generative AI can hallucinate. That is, these applications can totally make up things about the world or lead us to conspiratorial thinking (Hill, 2025). To demonstrate this, in my last prompt to Gemini, I tell it that I'm sharing the first paragraph of a famous novel, and that I'd like it to tell me what novel it's from and to classify the text as neutral, positive, or negative. However, in my prompt, I leave out the novel's first paragraph. The problem is that Gemini does not catch this error but still proceeds to answer the prompt, which it does by referring to the book A Tale of Two Cities by Charles Dickens. In the last prompt, I point out to Gemini that I didn't share the novel's paragraph with it. I then share it and it proceeds to tell me the source and classify the text appropriately. In short, this is a good reminder that generative AI can make stuff up.

Projects and Notebooks

One-off sessions with generative AI are helpful, but oftentimes we might want to use generative AI for a project. To help with this, OpenAI's ChatGPT and Anthropic's Claude AI offer Projects. Google offers a separate generative AI app for projects called NotebookLM. These applications allow us to organize our work, upload multiple documents, query those documents, and sometimes collaborate with others (much of this depends on the paid plans that are also offered).

These services are able to connect with other services, too. For example, OpenAI's ChatGPT can connect with Google Drive and Microsoft OneDrive plus accept uploads from your computer. NotebookLM can connect to Google Drive, accept links to sources, copied text, and accept uploads.

As an example use case, I can print this book as a PDF (see the printer icon at the top of the page), create a project in NotebookLM called Personal Knowledge Management, and upload that PDF to that project. I can add other sources, such as links to the sites that this book links to. Using all of this, I can ask NotebookLM to create study guides, mind maps, and an audio overview or even a fake podcast on the document. I can prompt NotebookLM and ask questions about the uploaded content. In short, it's like having a personal teacher's assistant.

AI Agents

AI agents are applications that use other applications or tools and data to accomplish tasks. Think of them as the beginnings of something like JARVIS, the AI assistant that Tony Stark uses in the Avengers movies. This entails what you might expect: an agent may have a persona and capabilities such as memory.

AI agents already exist, and we will see more of them in the near future. Google provides a nice overview in What is an AI agent? In short, AI agents will be able to act interactively or autonomously, either as a single agent operating independently or as part of a multi-agent system that collaborates with other AI agents.

See the AI 2027 report on how this might play out over the next few years.

What exactly will AI agents do? In healthcare settings, AI agents might assist medical professionals in diagnostics, surgery, and more. In educational settings, they might assist professors in course management, students as TAs, and more (see: Canvas and Gemini AI). The Google documentation provides other examples, including AI agents as customer agents, employee agents, creative agents, data agents, code agents, and security agents.

The Ethical Issues with AI

Environmental Considerations

There are a number of ethical considerations with AI that we need to be mindful of and that should shape our use of this technology. First, AI uses a lot of resources.

- AI uses a lot of electricity and water.

- In 2026, AI data centers are expected to use as much electricity as all of Japan, and they'll use billions of gallons of fresh water to cool the hardware that runs them (Berreby, 2024; Zewe, 2025).

- AI requires hardware, which requires:

- mining, which damages ecosystems, causes deforestation and soil erosion, pollutes water sources, and contributes to carbon emissions.

- IT manufacturing, which is energy intensive and uses hazardous chemicals (Roy et al., 2025).

- AI data labeling, which is completed by people, can be labor exploitative (Pogrebna, 2024).

The industry hopes to use AI to counter some of these impacts. For example, AI might help create more efficient heating and cooling systems and minimize the impact of carbon-based energy sources. Still, it's important to know that while information technologies have always had a negative environmental impact, AI technologies increase how serious this impact is on our planet and the people living on it.

Health Considerations

AI technologies are non-deterministic. This means that if we each give the same AI the same prompt, we each may get different answers. And these differences may only grow as we ask it more questions. Given this and the tendency for AI to hallucinate, it's no surprise that AI can cause conspiratorial thinking, as illustrated in Hill (2025), and has allegedly caused some to harm themselves, as reported in the news (Payne, 2024).

Please, if you ever consider harming yourself, get help as soon as possible: Text 988, or call or chat at the 988 Suicide & Crisis Lifeline. Learn more about suicide prevention https://www.nimh.nih.gov/health/topics/suicide-prevention.

Cheating Considerations

As students, you are responsible for your own learning and for proving to instructors that you have learned the material. Your eventual degree is a certification that you have acquired the skills and knowledge needed to begin working in your field of choice. If you use AI to cheat, then you will not be prepared to work in your field, having relinquished your ability to think to a machine.

You should also know that each of your instructors may have their own policy on the use of AI in their courses. While it may be allowed in some courses, it may not be allowed at all in others. Or if it's allowed, it may be allowed in certain scenarios only. You need to be mindful of the policies that your instructors have for their courses. The same holds true for your future employers.

AI course policies should be listed in your course syllabi, so read your syllabi. If in any doubt about what's permitted, ask your instructor.

The ethical aspect of being a student is grounded in your role as a learner. If you use AI, use it to augment your learning and your thinking and not to replace it. The science fiction author Neal Stephenson captures the risks perfectly:

Speaking of the effects of technology on individuals and society as a whole, Marshall McLuhan wrote that every augmentation is also an amputation. [...] Today, quite suddenly, billions of people have access to AI systems that provide augmentations, and inflict amputations, far more substantial than anything McLuhan could have imagined. This is the main thing I worry about currently as far as AI is concerned. I follow conversations among professional educators who all report the same phenomenon, which is that their students use ChatGPT for everything, and in consequence learn nothing. We may end up with at least one generation of people who are like the Eloi in H.G. Wells's The Time Machine, in that they are mental weaklings utterly dependent on technologies that they don't understand and that they could never rebuild from scratch were they to break down. Earlier I spoke somewhat derisively of lapdogs. We might ask ourselves who is really the lapdog in a world full of powerful AIs.

I take Stephenson's argument seriously, yet we need evidence. Currently, research on how AI impacts our thinking capabilities is nascent, but early research suggests that AI use is detrimental to our thinking (Kosmyna et al., 2025). (If you haven't read The Time Machine: Meet the Eloi.)

Conclusion

Generative AI is a technology that offers a lot of potential for personal knowledge management. In this lesson, we learned how to use generative AI effectively by engaging in lengthy sessions that involve creating well-constructed prompts. To construct good prompts, we employed tactics such as few-shot prompting and chain-of-thought prompting and demonstrated other aspects of prompting.

With tools such as Google's NotebookLM, we learned how to incorporate our own data that we have collected to continue to work on our prompts and to generate new content, ideas, and perhaps even, knowledge.

Ethically, we should be aware of the environmental costs of IT, including AI and its associated technologies, and of the mental and physical health risks these technologies pose. As students, we should also recognize that we are learners first and foremost, and that using AI unthinkingly may cost us our ability to think for ourselves.

References

Berreby, D. (2024, February 6). As use of A.I. soars, so does the energy and water it requires. Yale E360. https://e360.yale.edu/features/artificial-intelligence-climate-energy-emissions

Hill, K. (2025, June 13). They asked an A.I. chatbot questions. The answers sent them spiraling. The New York Times. https://www.nytimes.com/2025/06/13/technology/chatgpt-ai-chatbots-conspiracies.html

Minamitani, K. (2024, May 20). Social media addiction and mental health: The growing concern for youth well-being. Stanford Law School. https://law.stanford.edu/2024/05/20/social-media-addiction-and-mental-health-the-growing-concern-for-youth-well-being/

Payne, K. (2024, October 25). An AI chatbot pushed a teen to kill himself, a lawsuit against its creator alleges. AP News. https://apnews.com/article/chatbot-ai-lawsuit-suicide-teen-artificial-intelligence-9d48adc572100822fdbc3c90d1456bd0

Pogrebna, G. (2024, October 8). AI is a multi-billion dollar industry. It's underpinned by an invisible and exploited workforce. The Conversation. http://theconversation.com/ai-is-a-multi-billion-dollar-industry-its-underpinned-by-an-invisible-and-exploited-workforce-240568

Roy, R. B., Kanakagiri, R., Jiang, Y., & Tiwari, D. (2025). ForgetMeNot: Understanding and modeling the impact of forever chemicals toward sustainable large-scale computing. Proceedings of the ACM on Measurement and Analysis of Computing Systems, 9(2), 1–26. https://doi.org/10.1145/3727116

Zewe, A. (2025, January 17). Explained: Generative AI’s environmental impact. MIT News | Massachusetts Institute of Technology. https://news.mit.edu/2025/explained-generative-ai-environmental-impact-0117

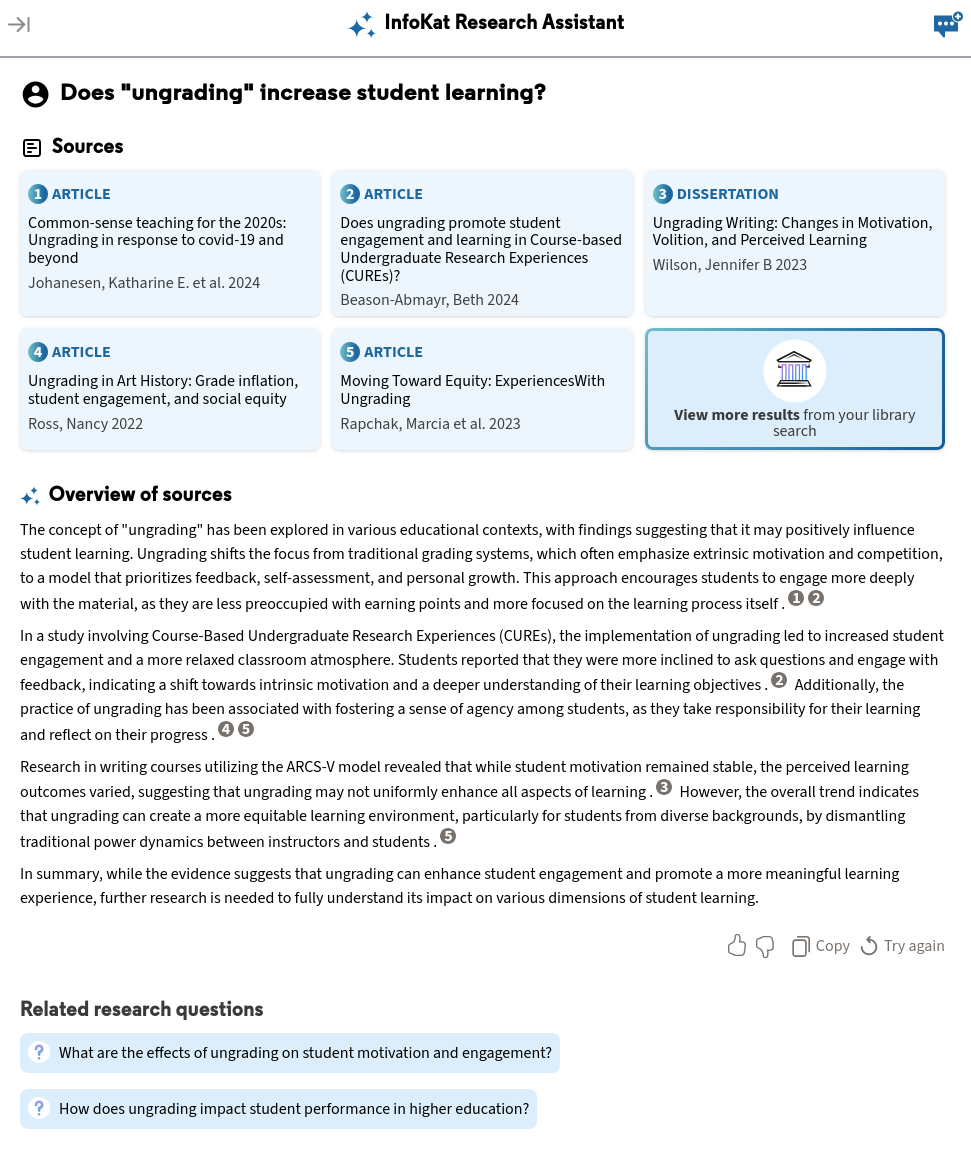

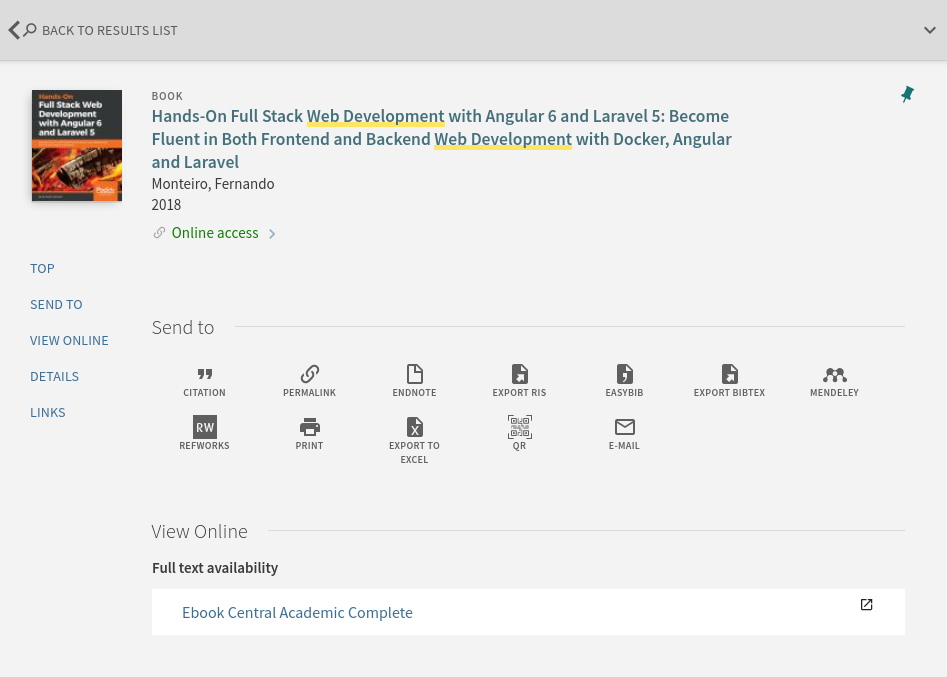

Using Information Resources and Managing Information Sources

In this chapter, our goal is to learn the difference between information resources and information sources. We'll begin with learning how to find, select, and utilize various information resources. This will involve learning how to navigate through the information out there and pinpoint what's relevant for our needs. Example information resources include library search, academic databases, online journals, and digital libraries.

Once we learn how to locate information sources, we'll learn how to use a reference manager, with a special focus on Zotero. Reference managers, like Zotero, are used to support our research and information organization. Specifically, Zotero is an application that helps manage bibliographic data, source documents, and related research materials. We'll walk through its features, which include the basics of adding sources, creating notes, integrating Zotero in our browsers and word processor applications, and generating bibliographies.

This section lays the foundation for the rest of the course. The skills and concepts you acquire and learn here will be integral to your success in managing information effectively, both in this course and in your broader academic or professional endeavors.

Information Sources and Resources

Please visit the links as you read through this page.

It's true that about anything can be a source of information. However, as we learn how to search and use information, it can be helpful if we focus on sources and their types. Doing so allows us to integrate different source types of information into our personal knowledge workflow.

Primary, Secondary, and Tertiary Sources

In general, it's helpful to classify information sources into three types: primary, secondary, and tertiary. Two of the main differences between these three types of information center on the strength of evidence they provide and on their proximity to the topic they cover. Primary sources generally provide the strongest evidence on a topic and are also closer to the topic (i.e., more direct) than secondary sources.

This is useful to know. In fact, UK Libraries provides use cases for these three types and some definitions:

- "Primary sources provide direct or firsthand evidence about a topic. The creator of primary sources is often intimately connected with the topic or event. Most often, creators of primary sources are participants in the events they describe, either directly or by virtue of living during the time period in question. Given this broad definition, primary sources comprise a wide range of resource types across the various disciplines" (Primary Sources).

- "Secondary sources depend upon primary sources. Secondary sources describe, discuss, interpret, comment upon, analyze, evaluate, summarize, and process primary sources. The important thing to keep in mind when trying to decide if a source is primary or secondary is whether or not the author did the thing they are reporting on. If they did, it is a primary source; if they did not, it is a secondary source" (Secondary Sources).

- "Tertiary sources contain information that has been compiled from primary and secondary sources. They organize information in an easily accessible way and often point the researcher to the primary and secondary literature on a given topic" (Tertiary Sources).

Let's expand on that a bit more:

- Primary Sources:

- Research Papers and Studies: These are original research documents written by researchers or scholars. They include firsthand data, experiments, observations, and findings. For example, a scientific paper reporting the results of an experiment on the effects of cold water immersion on the human body would be a primary source.

- Diaries and Personal Journals: Personal writings of individuals that offer insights into their thoughts, experiences, and daily life. For instance, a diary kept by a historical figure discussing their experiences during a particular period.

- Interviews and Oral Histories: Recorded conversations with individuals who have firsthand knowledge of specific events or topics. This can include oral history interviews with people who experienced significant historical events.

- Secondary Sources:

- Books: Books written by experts or scholars that analyze and interpret primary sources or provide an overview of a particular subject. For example, a book discussing the history of computing and the evolution of the command line since the 1970s.

- Review Articles: Scholarly articles that summarize and analyze existing research in a particular field. They provide a synthesis of primary research findings.

- Documentaries: Video or film productions that present information, often through interviews and visuals, to explain and explore a topic. Documentaries can be considered secondary sources when they provide analysis and context.

- Tertiary Sources:

- Encyclopedias: General reference works that provide concise summaries and explanations of topics. They often compile information from various secondary sources. Examples include online encyclopedias or reference books.

- Textbooks: Educational books designed for teaching a subject. They distill information from primary and secondary sources to provide structured knowledge on a topic.

- Databases and Indexes: Online databases that organize and index primary and secondary sources, making it easier to search for relevant information. Examples include academic databases like PubMed, Academic Search Ultimate, or library catalogs.

We can summarize the above list and definitions into the following table:

| Type of Source | Description | Examples |

|---|---|---|

| Primary Sources | Original firsthand accounts or data. | Research Papers and Studies; Diaries and Personal Journals; Interviews and Oral Histories |

| Secondary Sources | Analysis and interpretation of primary sources. | Books; Review Articles; Documentaries (when providing analysis) |

| Tertiary Sources | Summaries and reference sources. | Encyclopedias; Textbooks; Databases and Indexes |

Although the difference between these types is related to the strength of their evidence and their proximity to their topic, it can be helpful to think of this ordering, in reverse, as the way to search for information. As you learn how to locate information and conduct research, you will depend mostly on tertiary and secondary sources of information. For example, a reference work, like a dictionary, an encyclopedia, a bibliographic database, or even a search engine, is considered a tertiary source but can be an ideal place to learn about a topic and to learn how to get primary or secondary information about that topic. We do not generally cite tertiary sources, like Wikipedia or Google, in our papers or other works, unless it seems important to do so.

Note, however, that we also do not plagiarize from tertiary sources. If you do decide to use them as direct sources, then you need to cite them, and to quote them if you use the text or other material that they provide.

Next, a book or a review article, as examples of secondary sources, can serve as a great summary, discussion, distillation, or commentary on a topic, and can also, in its discussion of a topic, provide references to more direct sources of information, which may be other secondary sources or even primary sources. For example, a scholarly book on censorship in public libraries should include references to research papers on that topic.

Finally, primary sources are sources that lie closest to the topic. As the table and list above indicate, they may include academic research, diaries, interviews, and more, much of which is based on or is the source of direct analysis. This type of information can often be the most difficult to read and interpret, because it may require extensive domain knowledge of the topic.

Primary, secondary, and tertiary sources say something about the kind of evidence in those respective sources. They are, in a sense, a ranking of the strength of their evidence about a topic. Primary sources provide the strongest evidence. However, when searching for information, it is often better to begin with tertiary sources. This is particularly true when you are beginning to learn about a topic.

Question: where do AI technologies, like ChatGPT, Claude, and Gemini, fit into this scheme of source types?

Information Resources of Information Sources

In practice, the distinctions among source types can be useful in how we search for information. If I do a library search, I have the option to narrow my search by Resource Type. These resource types, in conjunction with types of information sources, help us locate the information we need and identify the strength of that information. Example resource types in UK's catalog include:

- Articles

- Newspaper articles

- Dissertations

- Books

- Book chapters

- Review

- Reports

- Reference entries

- Conference proceedings

- Datasets

- Maps

- Images

- Databases

- Government documents

- and more.

Note: while UK's InfoKat refers to these as resources, it's more appropriate to think of the items on the above list as source types, as described above. That is, in the view taken here, InfoKat is an information resource that provides access to primary, secondary, and tertiary information sources like articles, books, datasets, etc.

A resource is really a thing that supplies a thing, whatever that thing is. For our purposes, we can think of information resources as providers of information sources, like those listed above. One of the more important resources we can use are databases. Databases are great resources for locating sources of information. The term database itself is a bit problematic because it has multiple meanings, and I think it will help if we start this section by clarifying what we mean in this course by the term database.

According to the Dictionary of Information Science and Technology, the term database can be defined as:

a collection of files containing related information. Database consists of files and each file consists of records. Furthermore, each record consists of fields, which can be used to store the raw facts or data (p. 246).

This definition is not wrong, per se, but it is rather broad and therefore not entirely useful. For example, the definition entails that if I have a folder on my desktop, and if that folder contains only Excel spreadsheets on related content, and since those spreadsheets contain only records (i.e., rows) and fields (i.e., cells), then that folder on my desktop is a database. Although I suppose that's kind of true, I don't think that's really what the dictionary authors have in mind, and thus it's not an accurate enough nor a very useful definition.
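To see how little the broad definition requires, here is a minimal Python sketch, assuming a hypothetical spreadsheet that has been exported as CSV text. The file name and column names are invented for illustration. Each row is a record, each value in a row is a field, and the "database" is nothing more than a collection of such files.

```python
import csv
import io

# Hypothetical spreadsheet contents, exported as CSV: one file of related information.
SPREADSHEET = """title,author,year
The Time Machine,H. G. Wells,1895
A Tale of Two Cities,Charles Dickens,1859
"""

# Each row is a record; each column value within a row is a field.
records = list(csv.DictReader(io.StringIO(SPREADSHEET)))

for record in records:
    print(record["title"], "|", record["author"], "|", record["year"])

# Under the broad definition, a "database" is just a collection of such files.
database = {"novels.csv": records}
print(len(database["novels.csv"]), "records in novels.csv")
```

Nothing here helps us search, update, or retrieve the records efficiently, which is part of why the broad definition is not very useful on its own.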

A more specific and applicable definition is provided by the Online Dictionary of Library and Information Science, which defines a database as:

A large, regularly updated file of digitized information (bibliographic records, abstracts, full-text documents, directory entries, images, statistics, etc.) related to a specific subject or field, consisting of records of uniform format organized for ease and speed of search and retrieval and managed with the aid of database management system (DBMS) software. Content is created by the database producer (for example, the American Psychological Association), which usually publishes a print version (Psychological Abstracts) and leases the content to one or more database vendors (EBSCO, OCLC, etc.) that provide electronic access to the data after it has been converted to machine-readable form (PsycINFO), usually on CD-ROM or online via the Internet, using proprietary search software.

Most databases used in libraries are catalogs, periodical indexes, abstracting services, and full-text reference resources leased annually under licensing agreements that limit access to registered borrowers and library staff. [...] See also: archival database, bibliographic database, embedded database, metadatabase, and niche database.

The above definition is more helpful because it provides examples of content type (e.g., bibliographic records, abstracts, full-text documents, etc.) and how to access that content (e.g., library catalogs, periodical indexes), because it partitions out the components of a database (records, search, retrieval), and because it distinguishes a database proper from a database management system (DBMS), which is what the prior definition was attempting to define. The ODLIS definition also highlights the role of database producers in producing databases (e.g., the American Psychological Association), which tells us something about the subject matter of a database (e.g., psychology).
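The distinction between a database and a DBMS can be sketched in a few lines of Python. This is only an illustration under my own assumptions: SQLite (through Python's standard sqlite3 module) stands in for the DBMS, and the table name, column names, and subject labels are hypothetical. The records are the database; the software that stores and searches them is the DBMS.

```python
import sqlite3

# SQLite plays the role of the DBMS; the database lives in memory for this sketch.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE records (title TEXT, author TEXT, year INTEGER, subject TEXT)"
)

# Every record has the same fields, which is what "uniform format" means here.
conn.executemany(
    "INSERT INTO records VALUES (?, ?, ?, ?)",
    [
        ("The Time Machine", "H. G. Wells", 1895, "fiction"),
        ("A Tale of Two Cities", "Charles Dickens", 1859, "fiction"),
        ("Psychological Abstracts", "American Psychological Association", None, "psychology"),
    ],
)


def search(subject: str) -> list[str]:
    """Retrieve the titles of all records filed under a subject."""
    rows = conn.execute(
        "SELECT title FROM records WHERE subject = ? ORDER BY title", (subject,)
    )
    return [title for (title,) in rows]


print(search("psychology"))
print(search("fiction"))
```

Library databases are far larger and use proprietary search software, but the idea is the same: uniform records organized so that search and retrieval are fast.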

What are example databases?

The ODLIS definition provides some leads to this question. The definition tells us that example databases include "digitized information containing bibliographic records, abstracts, full-text documents, directory entries, images, and statistics" that are "related to a specific field or subject," and that these can be accessed through library "catalogs, periodical indexes, abstracting services, and full-text reference resources." Given that, let's look at some examples.

Library Catalogs and Discovery Services

We'll start with the most general example, and that's the library catalog. Traditionally, library catalogs were databases that primarily provided information about where we could find content on a library's shelves (i.e., print content). However, given that more content is available online, nowadays these catalogs are more often referred to as discovery services. In this expanded role, not only can they tell us where to find a book on a shelf, but they can also provide full text access to online content in other databases.

We can access the University of Kentucky's library catalog/discovery service at their main website at:

Please visit the link and test your own searches.

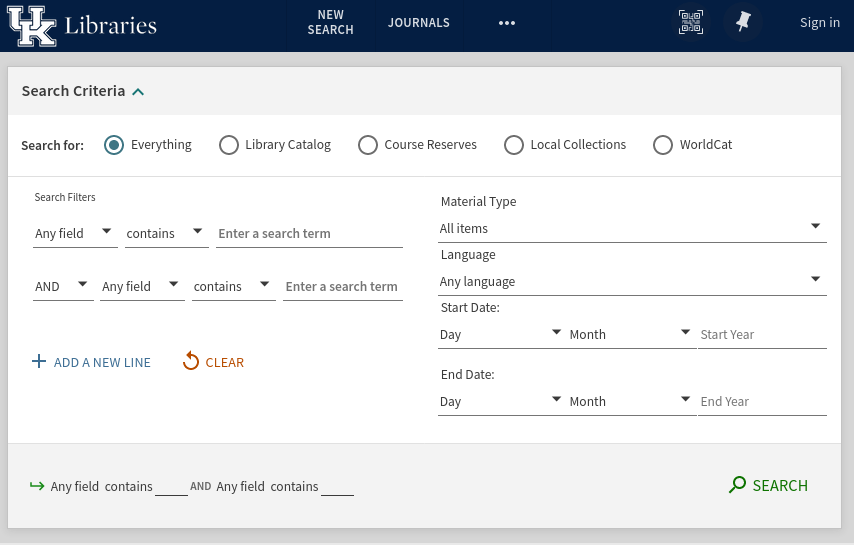

In the center of UK Libraries' homepage, we find several links of interest to: InfoKat (Books and More), Databases, Journals, and ExploreUK (Archive).

InfoKat searches bibliographic records. It does not provide full text access. Instead, it provides access by linking to content or by providing physical directions to that content. For example, if that content is a print item someplace on the library's shelves, InfoKat might tell us it's located on the 5th floor of WT Young and has the call number Z674.82.159 S42 1996, or something like that. However, if that content is online, then InfoKat should give us a direct link to it. It could also be that InfoKat has records for information sources but not the actual sources themselves. In those cases, the bibliographic records should provide a link to request the item through interlibrary loan (ILL).
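The three outcomes described above (an online link, a shelf location, or an ILL request) can be sketched as a small decision function. This is a hypothetical model in Python, not how InfoKat is actually implemented. The class name, field names, and example values are my own assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CatalogRecord:
    """A simplified bibliographic record; all field names are hypothetical."""

    title: str
    shelf_location: Optional[str] = None  # set for print items the library holds
    url: Optional[str] = None  # set for items available online


def how_to_access(record: CatalogRecord) -> str:
    """Describe how a reader would get to the item that a record points to."""
    if record.url:
        return f"Read online: {record.url}"
    if record.shelf_location:
        return f"Find on shelf: {record.shelf_location}"
    # The catalog knows the item exists, but the library does not hold it.
    return "Request through interlibrary loan (ILL)"


# Hypothetical records covering the three cases.
print(how_to_access(CatalogRecord("An ebook", url="https://example.org/ebook")))
print(how_to_access(CatalogRecord("A print book", shelf_location="WT Young, 5th floor")))
print(how_to_access(CatalogRecord("A book held elsewhere")))
```

The point is that the record and the source are separate things: the record always exists in the catalog, while the way we reach the source depends on what the record tells us.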

Google and other search engines function in much the same way. If I do a search for the term databases in Google, the search engine will return results to sites that are relevant to that term, like a Wikipedia article on databases. Google itself does not store the full text content and only provides access (i.e., links) to content based on its own indexing of where things are on the web. Unlike the library's catalog, if Google returns results for pages it cannot access or that don't exist anymore, there is no ILL service offered, and we're left to our own devices to find access to that information.

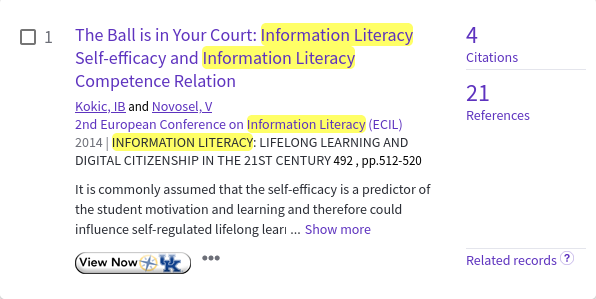

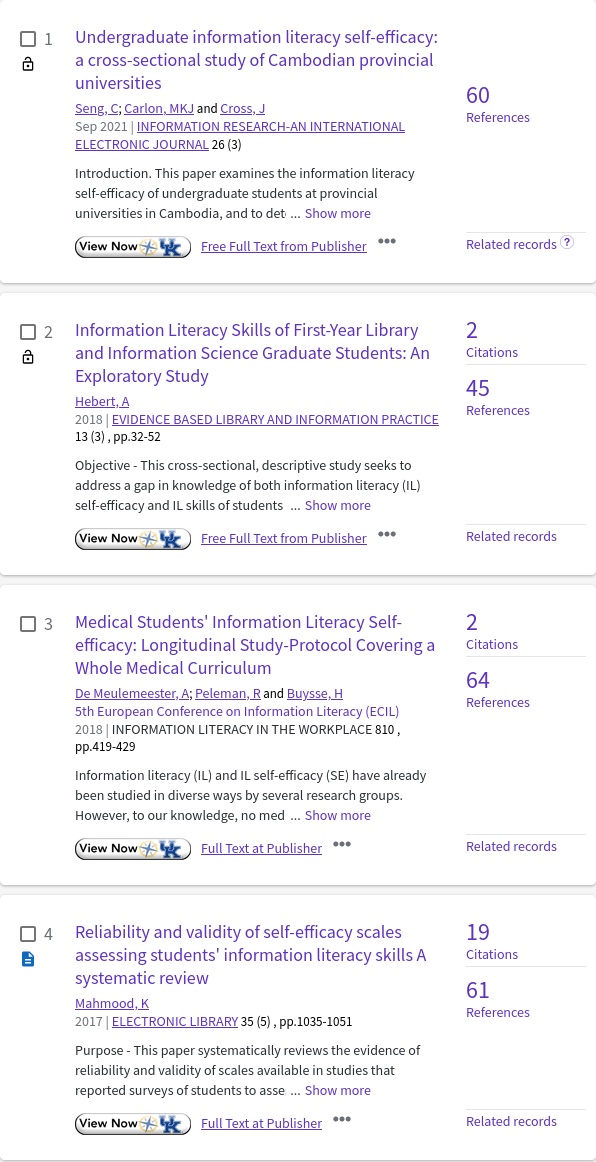

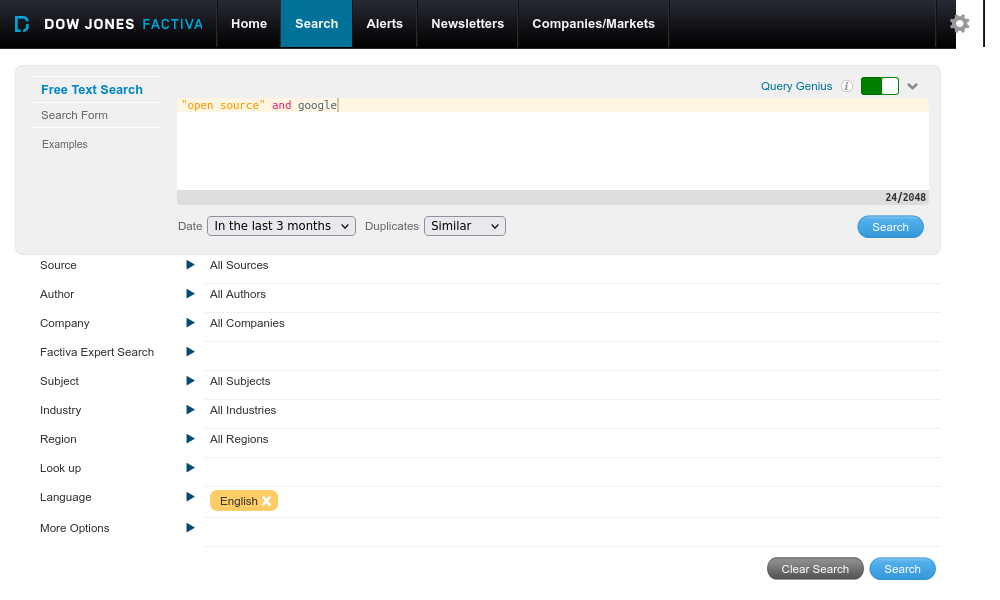

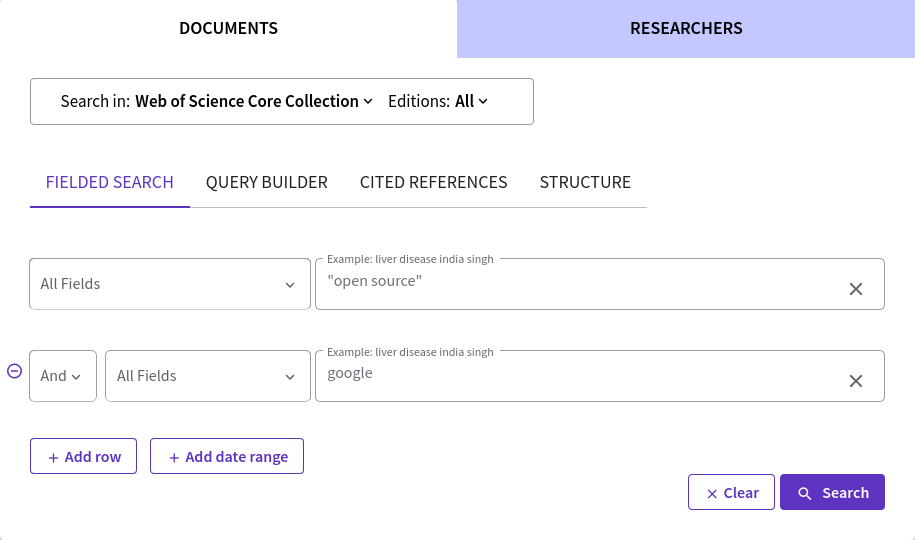

Periodical Indexes and Abstracting Services

InfoKat returns a wide variety of bibliographic records that describe and provide access to books, ebooks, book chapters, maps, scholarly articles, news articles, images, and more. InfoKat is therefore great as a discovery service because it functions as a kind of catchall for the types of works that a library can provide access to, as just listed. However, there will be times when we will want to focus our search to a more limited subset of records, such as those produced by periodicals.

Periodicals are nothing more than magazines and journals. This genre publishes issues at regular (or even irregular) intervals, such as weekly, bimonthly, monthly, or quarterly, with no predetermined end. Periodicals focus on different audiences, such as the general public (e.g., Time magazine, The Atlantic), parts of the general public (e.g., WIRED, Elle, Better Homes and Gardens), the general scholarly community (e.g., Nature, Science), or specific scholarly communities (e.g., Journal of Information Technology, Journal of Synchrotron Radiation, Journal of Sociology). Scholarly journals are generally (but not always) peer-reviewed journals, which means that when authors submit their manuscripts to these journals, the manuscripts are sent out to two to three peers in the scholarly community who read the manuscripts and either write recommendations to the editors for improving the manuscript or recommend that the journal editor reject it. Different journals and different scholarly disciplines have different criteria for making judgments on quality.

In the past, periodicals were published based on print runs. That is, publishers would bundle articles together, bind and print them, and mail them to consumers, bookstores, libraries, etc. Nowadays, most periodicals are published online, which means they are no longer restricted by print runs and thus may publish new content daily.

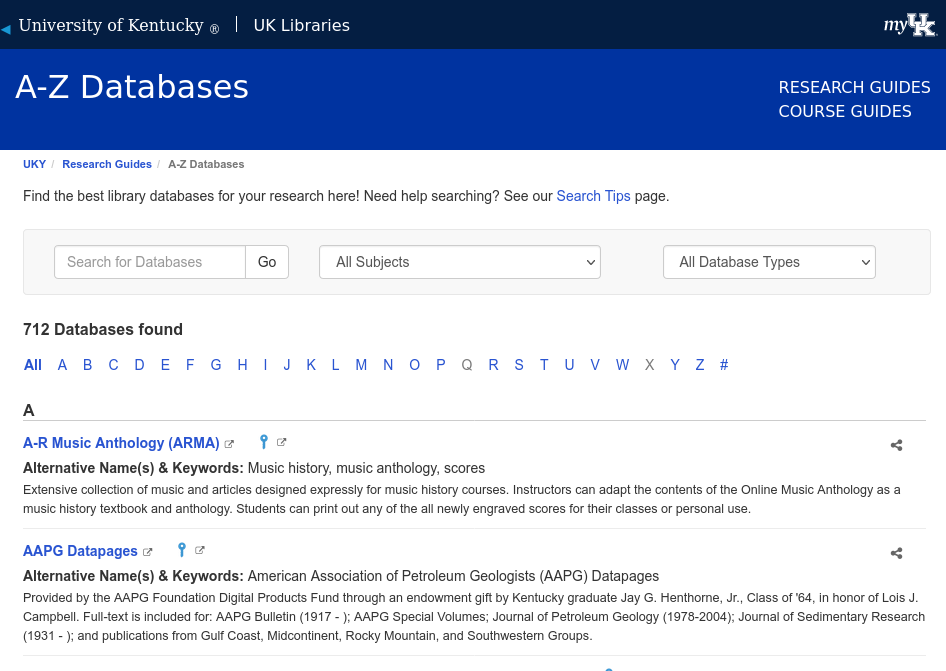

There are three broad ways to locate sources in periodicals. At the UK Libraries homepage, we can select the links to InfoKat, Journals, or Databases. Since periodicals publish articles, we can filter our InfoKat searches by article types to limit results to periodicals. The Journals link lets us search for specific journal titles and, if desired, within disciplinary/subject categories (e.g., Health & Biological Sciences, Law, Politics & Government, Language & Literature, or Social Sciences, Journalism & Communications, and more). Once we locate a journal of interest, we can visit the link and then search within that journal or peruse the table of contents for specific issues. Note that a Journals search is just a way to use InfoKat to limit results to Journal titles.

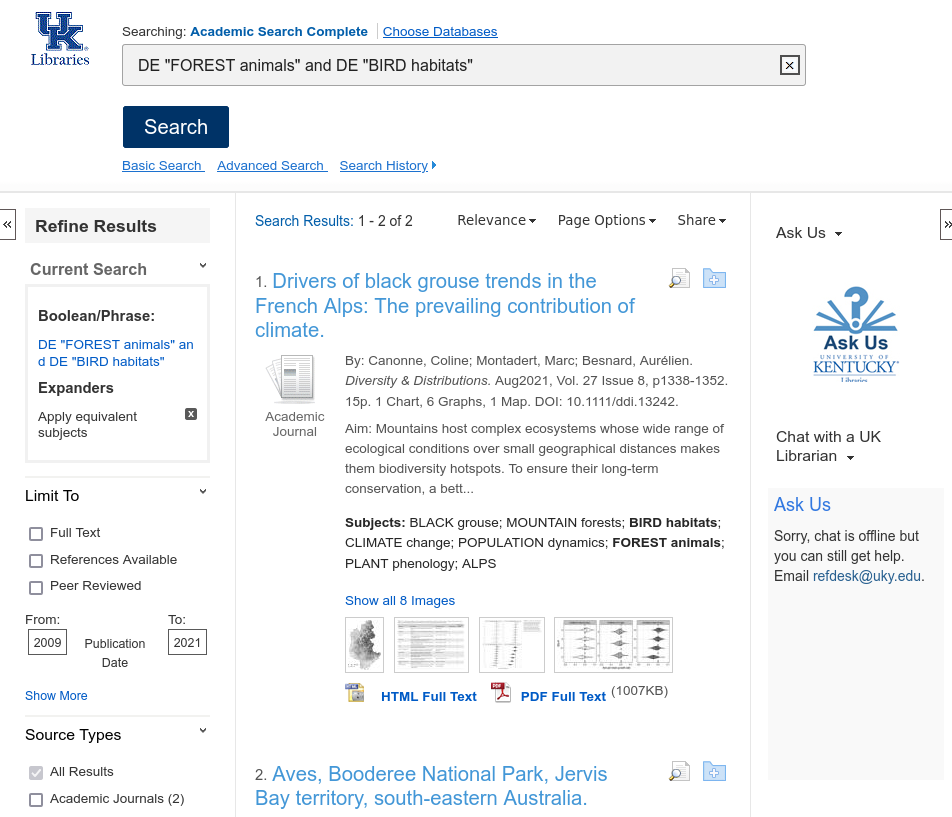

The Databases link is a separate product, called A-Z Databases. When visiting this link, we can view a list of Databases the library has access to, and thus expand our search of records to broader categories or subject areas of periodicals. Here we find databases like Academic Search Ultimate, which will let us search thousands of periodicals, including magazines and scholarly journals, across a range of subject categories. JSTOR is another database that indexes a broad subject range of periodicals but with greater emphasis on peer-reviewed material. Databases may also have a specific focus. For example, the African American Communities database provides access to archival and historical papers, oral histories, essays, letters, pamphlets, and newspapers on African American communities and is geographically focused on Atlanta, Chicago, New York, and parts of North Carolina. Then we also have access to databases like the Kentucky Building Code, which is a database that links to residential and commercial regulatory information on building and plumbing design in Kentucky.

Many of the resources listed above, as well as the hundreds (over 700 as of 2025) that UK Libraries offers and that I have not described, provide access to full-text content. But this isn't necessarily so. At the very least, a database must offer records that describe information sources. These records list article titles, author names, publication names, publication date information, and often subject terms. Abstracting services provide the same information but also provide abstracts of journal articles, which are searchable. Since abstracts provide summary information of the main articles, this is helpful in locating relevant information sources. Many databases do provide full-text access, but if they don't, they will provide mechanisms for requesting the source through ILL.
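Here is a minimal Python sketch of why searchable abstracts matter. The record structure, field names, and the example article are hypothetical. An index-only record can be matched only on its descriptive fields, such as the title, whereas a record from an abstracting service can also be matched on its abstract.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ArticleRecord:
    """A simplified periodical record; all field names are hypothetical."""

    title: str
    author: str
    abstract: Optional[str] = None  # provided by abstracting services
    full_text: Optional[str] = None  # provided by full-text databases


def matches(record: ArticleRecord, term: str) -> bool:
    """Return True if the term appears in the record's title or abstract."""
    term = term.lower()
    searchable = [record.title, record.abstract or ""]
    return any(term in text.lower() for text in searchable)


# The same hypothetical article, as an index might describe it...
index_only = ArticleRecord("Cold water immersion and recovery", "A. Author")
# ...and as an abstracting service might describe it.
with_abstract = ArticleRecord(
    "Cold water immersion and recovery",
    "A. Author",
    abstract="We measured heart rate in adults after cold water immersion.",
)

print(matches(index_only, "heart rate"))
print(matches(with_abstract, "heart rate"))
print(with_abstract.full_text is None)
```

In this sketch, a search for a term that appears only in the abstract finds the article in the abstracting service but not in the plain index. Neither record includes the full text, which is the case where we would turn to ILL.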

Full-text Resources

Since periodical indexes and abstracting services do not necessarily provide direct full text access, you might wonder what the point is if you can't get the full text of some source that the library tells you exists. The point is that even if the library doesn't have immediate access to a source, if it can tell you that it exists, a librarian can likely get the source through interlibrary loan (which is fast!) or some other means. So if the stakes are high (e.g., you are a cancer researcher) and you really need or just want information (e.g., about a cancer therapy), then periodical indexes and abstracting services are great resources even if they do not provide immediate full text access.

That said, in addition to a variety of periodical indexes and abstracting services, UK Libraries provides quite a few full-text databases. Visit the A-Z Databases page to see all of your choices. A full-text database may also be an abstracting service if it provides the basic information listed above (title, author name, etc.) about periodical content. It becomes a full-text abstracting database if it also provides access to the full-text source. Other non-periodical databases, like the African American Communities database, also provide full-text access to their sources and may even be thought of as digital libraries and not just databases.

A digital library is more like a web-based library, archive, or museum than it is like a database, as described above. In addition to letting us search the records it holds, a digital library is specifically curated. It might have exhibits, collections, and galleries, and it may include scholarly pamphlet-like content that describes those things. Other examples of digital libraries include the Digital Public Library of America and HathiTrust.

In the next few paragraphs, I will focus on a few general-purpose full-text databases that you may find helpful as you work towards your degree. Although these databases provide full-text sources, they may not provide full text for everything they have records for.

Academic Search Ultimate

Academic Search Ultimate (ASU) is a database of databases. It can search over 60 different databases and indexes, each covering hundreds of journal titles. These include:

- Communication and Mass Media Complete

- Sociological Collection

- Music Index

- SPORTDiscus

- MEDLINE

- eBook K-8 Collection

- and more.

In ASU, we can search all 60-plus databases at one time, or we can select one or more of them. It makes sense to narrow our selection of databases as we develop more knowledge of a topic. For example, if we were interested in health-related information, we might want to search the following ASU databases at once:

- Consumer Health Reference eBook Collection

- Health and Psychosocial Instruments

- MEDLINE

- APA PsycInfo

ProQuest

Like ASU, ProQuest provides access to many subject-based databases, and many of the database topics overlap. ProQuest uses this overlap to highlight database collections. For example, the SciTech Premium Collection includes three databases that can be searched at once. These include the:

- Natural Science Collection

- Science Database

- Technology Collection

ProQuest's Social Science Premium Collection includes databases on criminology, education, library and information science, politics, sociology, and more.

ProQuest also provides database access to magazines and news content, including The Vogue Archive and various current and historical newspapers, such as an archive of The New York Times or current issues of The Courier-Journal (Louisville, KY).

JSTOR

JSTOR is another multi-disciplinary database. JSTOR covers subjects such as:

- Arts

- Business & Economics

- History

- Medicine & Allied Health

- Science & Mathematics

- Security studies

- Social Sciences, and more.

Each of these subject areas includes access to many journal titles, and therefore, many journal articles. JSTOR has long focused on back issues of journals, but in recent years has made moves to include current literature and open access (OA) content. JSTOR also includes ARTSTOR, a database of images, video, and other multimedia content, much of which is also available as open access.

Note: Open access (OA) content is content that is freely available to access. That does not, however, mean that the content is free of copyright protections. There are different types of OA copyright licenses, and each will stipulate how we can use that content. CreativeCommons.org provides a list of some of the more popular licenses. In all cases, though, if you use OA content, be sure to attribute it to the source.

Conclusion

Remember, one of the main ideas of this course is that there are many sources of information that are not easily available via Google or some other search engine. Even when we do use Google or Bing or something else, there are certain advanced tricks we can use to leverage them to find better information. We'll cover those soon. Regardless, if we apply what we'll learn in this class, we'll become that much more accomplished.

In short, we are going to learn a bit more about what's hidden from most users and from the common view. To do that, we needed to understand the difference between information sources and information resources. Information sources can be divided into three types: primary, secondary, and tertiary. The differences among them center around the strength of the evidence they provide and their proximity to their topic. However, when searching for information, it is often better to begin with tertiary and secondary sources. In the end, an information source is the main item we often aim to acquire.

An information resource is a tool that provides or supplies access to information sources. In this section, some examples of information resources are the library discovery service, a search engine, and databases like JSTOR, ASU, or ProQuest. These are all tertiary sources that can lead us to more substantial sources of primary and secondary information.

Reference Managers: Getting Started

Readings

Please visit the links in this section as you read through it, and read through the Zotero Documentation, especially after watching the accompanying software demonstration.

Introduction

Now that I have introduced you to some of the different resources for information sources that exist (we will learn about more in Chapter 5), our next personal knowledge management challenge involves managing the information sources that we locate and want to use.

Imagine, for example, that we need to locate and use three academic sources for a class paper assignment. We could, like probably many do, search for information on the fly, locate some journal articles, figure out how to cite the articles, and manually add the citations to our Word documents. Or, perhaps if we're a little more advanced, we could download the papers to our computers and store them in the same folder where a draft of our paper assignment is stored. Although that might be a bit more organized, let's recognize that it is the 21st century and that we have these computers that can do so much more for us than act as simple file cabinets. Basically, if we want to be more efficient with our workflows, then this is not the way.

To be more efficient, we can use a reference manager (RM) (also called a citation manager). A RM is a piece of software that helps us manage this process of saving, collecting, and using information sources. Although generally aimed at academic users, RMs can also be used to bookmark, save, and collect all sorts of web and print information sources and for all sorts of outcomes, whether those outcomes are class papers, engineering projects, musical composition projects, biology experiments, and so on. Basically, when we use the appropriate tool to collect and use information, then this is the way.

Reference Manager Software

You are required to use a RM in this course, and I will focus on the Zotero RM. However, you are free to choose other options, even if I don't cover them, and there are many. Wikipedia has a page that lists around 20 RM options.

There are a few reasons I will focus on Zotero, though. First, I use it, and I know it fairly well, although I'm always learning new things about it. But most importantly, it's free (and open source software), it's consistently maintained and updated, it provides all the major functions that a RM should provide and more, and it's available on Windows, macOS (desktop and laptop computers), iOS, and Android (Zotero app).

If you elect to use an alternate RM, be aware that they are not all created equal. The two most popular, functional, and well-supported alternatives are Mendeley and EndNote. Both of these are more academic-centric, while Zotero is more agnostic about source information and usage.

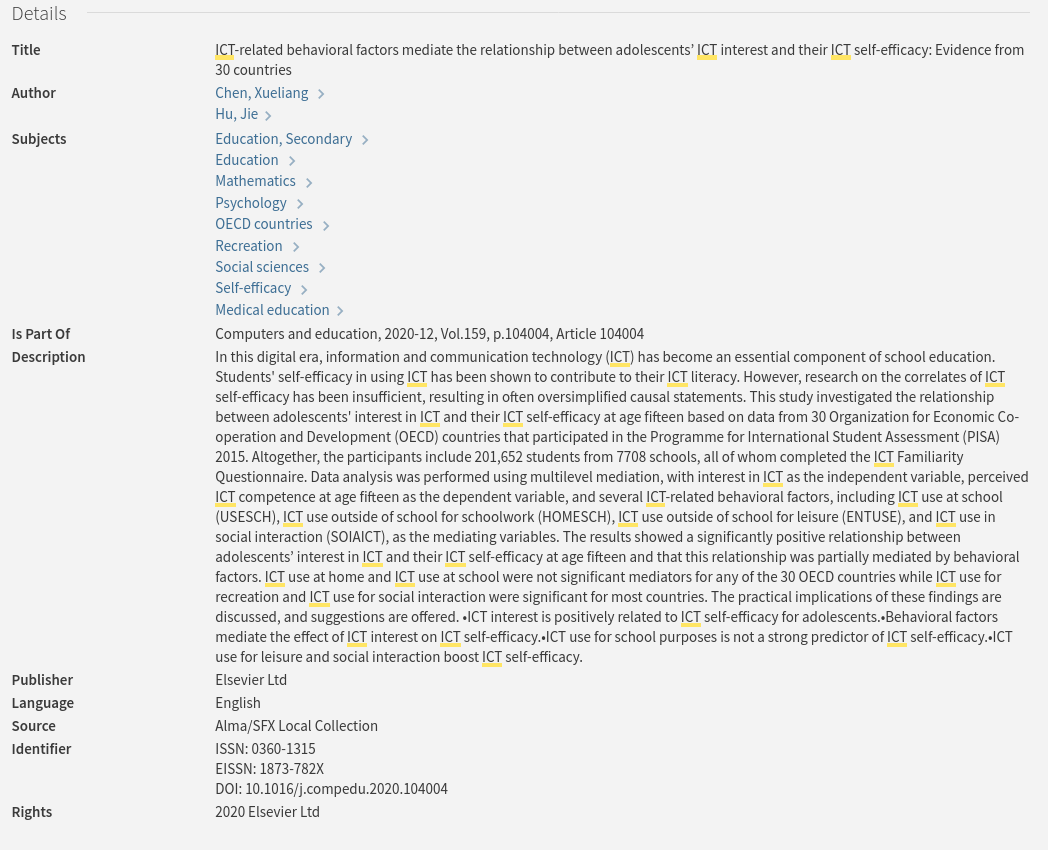

Mendeley and EndNote are more academically oriented because they are owned by companies involved with academic publishing and academic databases. Mendeley is owned by the company Elsevier, which provides the Scopus database, a bibliographic, abstract, and citation database, and publishes multiple journal titles. EndNote is owned by Clarivate, a research analytics company, which provides the Web of Science and ProQuest databases. Web of Science is a citation database like Scopus.

Zotero

Gilmour & Cobus-Kuo (2011) identify eight functions that a RM should provide, and Zotero performs all of these functions. These functions include:

- Import citations from bibliographic databases and websites

- Gather metadata from PDF files

- Allow organization of citations w/in the reference manager database

- Allow annotations of citations

- Allow sharing of the reference management database

- Allow data interchange with other reference manager products through standard metadata formats

- Produce formatted citations in a variety of styles

- Work with word processing software to facilitate in-text citation (Gilmour & Cobus-Kuo, 2011, Introduction section).

Regardless of which RM we use, we want to pick one that performs most if not all of the above functions. Fortunately, Zotero satisfies the requirements listed above. The Zotero Quick Start Guide provides a nice overview of the basic functions and how to use them, and the rest of the documentation describes how to do more.
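To get a feel for what a few of these functions involve, here is a small Python sketch that takes one stored record and produces a formatted reference, an in-text citation, and an export in RIS, a common tagged format for exchanging references between RMs. It uses the Gilmour & Cobus-Kuo article cited in this section as its data. The function names and the dictionary layout are my own assumptions for illustration; this is not how Zotero works internally, and the formatting only approximates APA style (no italics, no handling of edge cases).

```python
# One stored item, using the reference cited in this section.
item = {
    "authors": ["Gilmour, R.", "Cobus-Kuo, L."],
    "year": 2011,
    "title": "Reference management software: A comparative analysis of four products",
    "journal": "Issues in Science and Technology Librarianship",
    "volume": "66",
    "issue": "Summer 2011",
    "doi": "10.5062/F4Z60KZF",
}


def join_authors(authors):
    """Join author names as 'A, B, & C' (a simplified APA-like rule)."""
    if len(authors) == 1:
        return authors[0]
    return ", ".join(authors[:-1]) + ", & " + authors[-1]


def format_reference(item):
    """Build a plain-text, APA-like reference list entry."""
    return (
        f"{join_authors(item['authors'])} ({item['year']}). {item['title']}. "
        f"{item['journal']}, {item['volume']}({item['issue']}). "
        f"https://doi.org/{item['doi']}"
    )


def in_text(item):
    """Build a simplified APA-like in-text citation."""
    surnames = [name.split(",")[0] for name in item["authors"]]
    if len(surnames) > 2:
        who = surnames[0] + " et al."
    else:
        who = " & ".join(surnames)
    return f"({who}, {item['year']})"


def to_ris(item):
    """Export the item as a minimal RIS record for a journal article."""
    lines = ["TY  - JOUR"]
    lines += [f"AU  - {name}" for name in item["authors"]]
    lines += [
        f"PY  - {item['year']}",
        f"TI  - {item['title']}",
        f"JO  - {item['journal']}",
        f"VL  - {item['volume']}",
        f"DO  - {item['doi']}",
        "ER  - ",
    ]
    return "\n".join(lines)


print(format_reference(item))
print(in_text(item))
print(to_ris(item))
```

The point of the sketch is that the record is entered once and then reused: the same stored data drives the bibliography entry, the in-text citation, and the export file. A real RM does this for thousands of citation styles and many export formats.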

The Zotero Quick Start Guide shows us how to:

- Install and open the Zotero desktop/laptop application

- Install and use the Zotero browser plugin

- The download page provides links for using Zotero with:

- Firefox

- Edge

- Chrome

- Safari

- How to collect items (e.g., books, articles, images, etc.)

- What we can do with those items

- How to create collections to organize items by topic, project, etc.

- How to use tags to add additional organizational layers

- How to search your Zotero library and save your searches

- How to import or add attachments (like PDF copies of your items)

- How to add notes to your items

- How to cite items in your papers, etc.

- How to use Zotero with Microsoft Word, Google Docs, or other word processors

- The word processor plugin provides links for using Zotero with Microsoft Word, LibreOffice Writer, and Google Docs

- How to create bibliographies

- How to use Zotero and access your Zotero library on multiple devices

- How to collaborate on research projects using Zotero
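Collections and tags, mentioned in the list above, are two independent ways of organizing the same items. The following Python sketch illustrates the difference under some simplifying assumptions. The `Library` class, its methods, and the sample items are hypothetical and invented for this example; they are not Zotero's actual data model.

```python
from collections import defaultdict


class Library:
    """A toy reference library: items can sit in many collections and carry many tags."""

    def __init__(self):
        self.items = {}                      # item key -> title
        self.collections = defaultdict(set)  # collection name -> item keys
        self.tags = defaultdict(set)         # tag -> item keys

    def add_item(self, key, title, collections=(), tags=()):
        """Store an item once, then file it under any collections and tags."""
        self.items[key] = title
        for name in collections:
            self.collections[name].add(key)
        for tag in tags:
            self.tags[tag].add(key)

    def find(self, collection=None, tag=None):
        """Return sorted titles matching the given collection and/or tag."""
        keys = set(self.items)
        if collection is not None:
            keys &= self.collections.get(collection, set())
        if tag is not None:
            keys &= self.tags.get(tag, set())
        return sorted(self.items[key] for key in keys)


# Made-up items, for illustration only.
library = Library()
library.add_item("a1", "Example article on sleep",
                 collections=["Class paper"], tags=["to-read"])
library.add_item("b1", "Example book on memory",
                 collections=["Class paper", "Thesis"], tags=["read"])
library.add_item("w1", "Example web page on note taking",
                 collections=["Thesis"], tags=["to-read"])

print(library.find(collection="Thesis"))
print(library.find(collection="Thesis", tag="to-read"))
```

In this sketch, an item is stored once but can appear in more than one collection, and tags cut across collections. That is why tags work well as an additional organizational layer, such as marking what we still need to read across all of our projects.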

Note: If you choose Zotero, as I suggest and recommend, you should create a free account (use your personal email to sign up). As you add material to your Zotero collection, your collection will be synced with and backed up to Zotero's servers. Zotero registration: https://www.zotero.org/user/register/

Zotero Demo

In the demonstration video, I will show you how to complete the above steps so that you may get started using Zotero. Again, you may use an alternate RM, but throughout this course, I will demonstrate Zotero.

Your task this week is to download and start using Zotero, or some alternate RM. Please follow the demo video to complete the process.

Conclusion

- Reference (or citation) managers (RM) provide more sophisticated tools to manage information sources than simple file systems provide.

- There are a slew of RM applications available to use, but we want to be sure we pick one that provides as many functions as possible, that is available on as many devices and operating systems as possible, and that can be integrated with a variety of word processing applications.

- Although you are welcome to use an alternate RM, in this course we focus on Zotero.

References (created with Zotero)

Gilmour, R., & Cobus-Kuo, L. (2011). Reference management software: A comparative analysis of four products. Issues in Science and Technology Librarianship, 66(Summer 2011). https://doi.org/10.5062/F4Z60KZF

Welcome to Retrieving and Evaluating Information

Welcome to the chapter on retrieving and evaluating information. In this chapter, we'll practice our skills in searching and assessing the information that's available to us through two types of information resources: the web and the academic library.

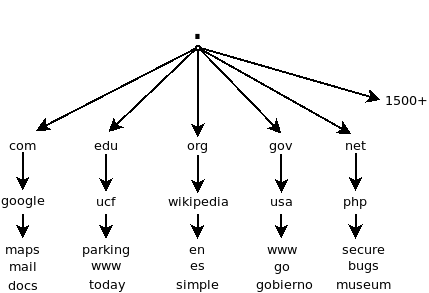

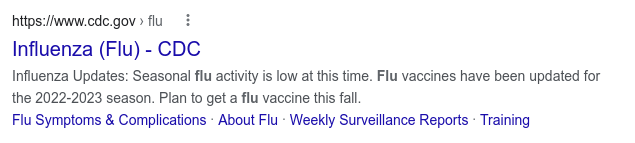

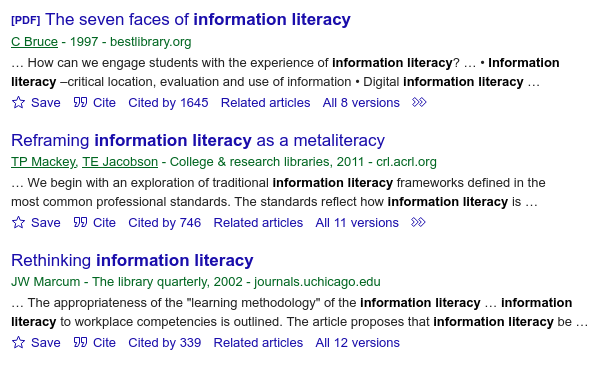

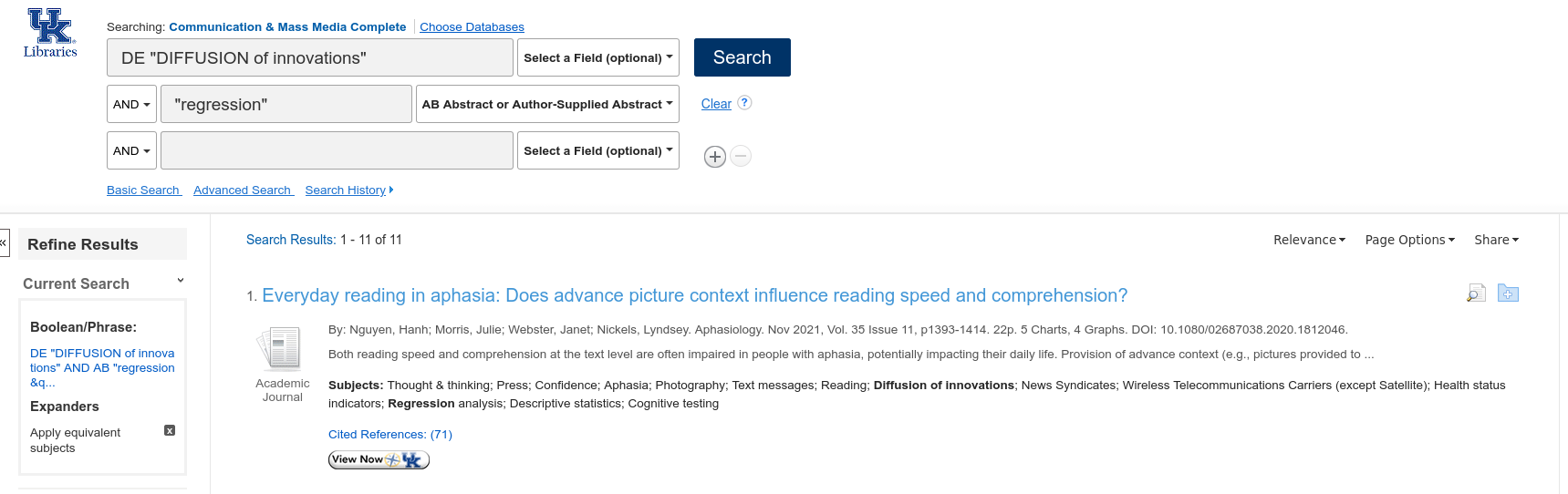

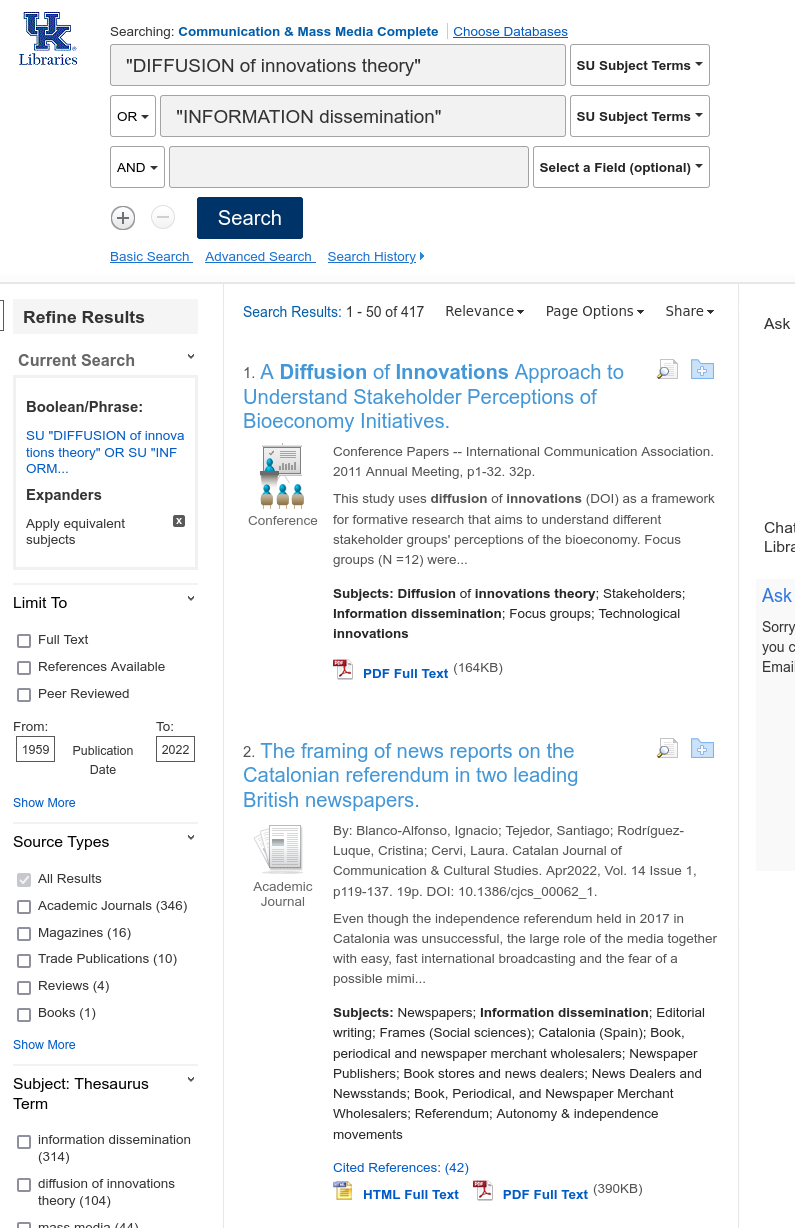

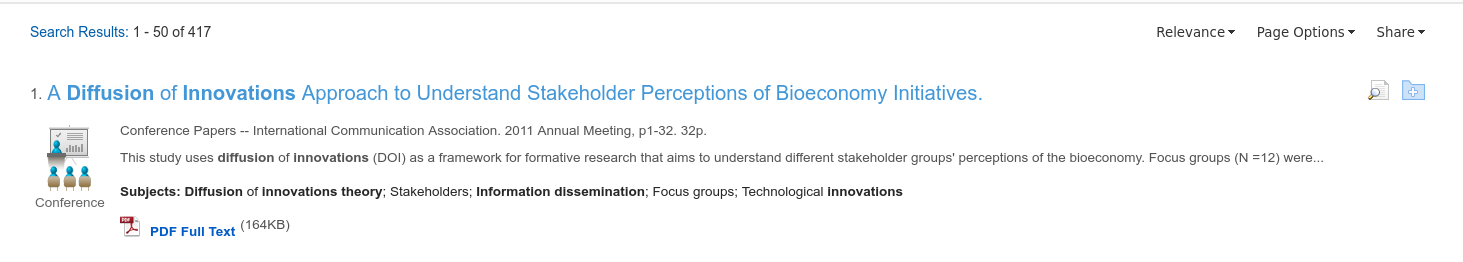

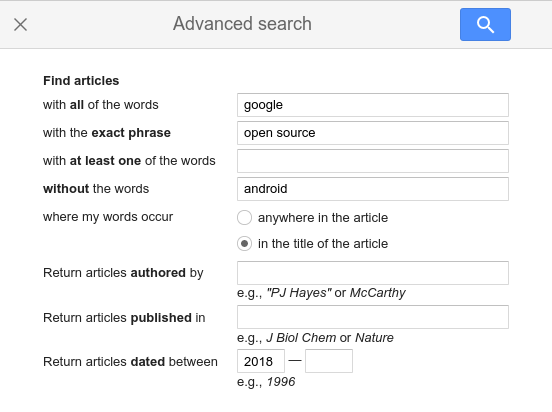

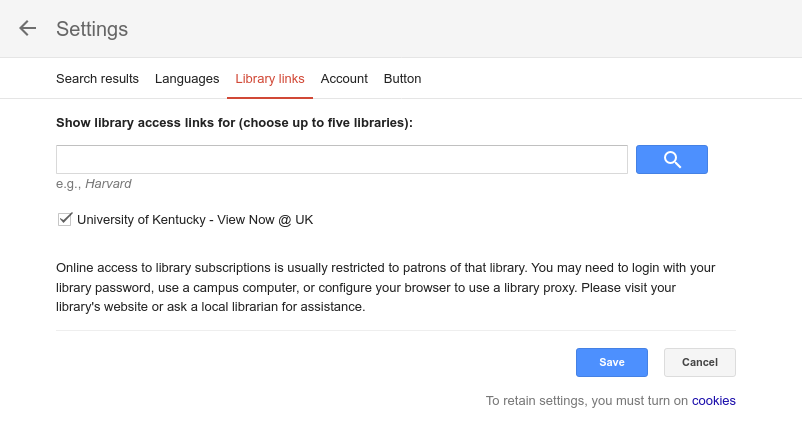

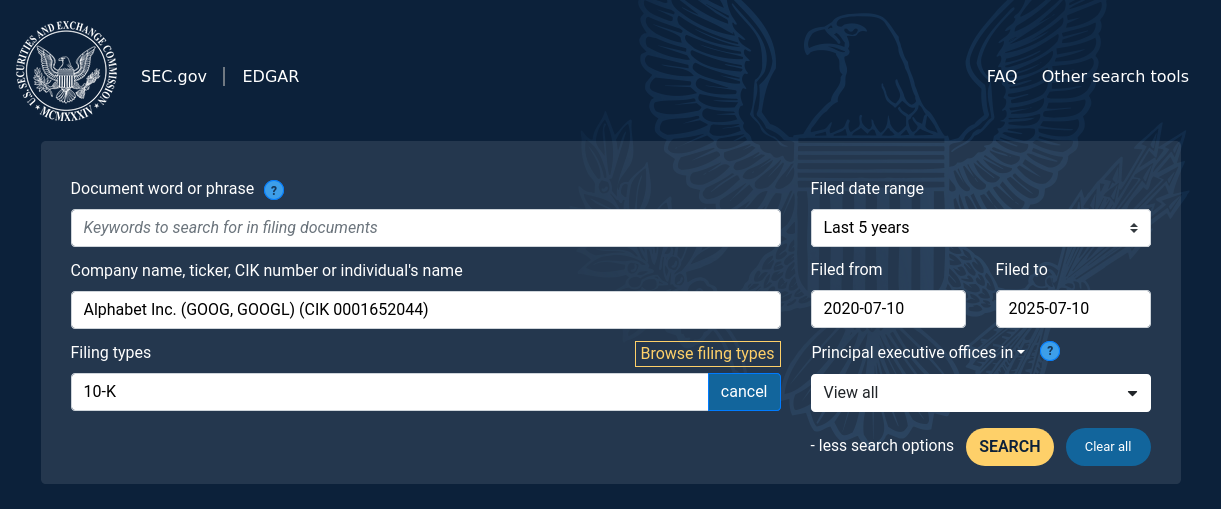

In our first section on Web Information Retrieval, we'll learn how the internet/web is organized and how we can use this organization to our advantage. Specifically, knowing how the internet/web is organized will aid us in creating effective search queries and sessions, where effective means finding the right information quickly and efficiently.